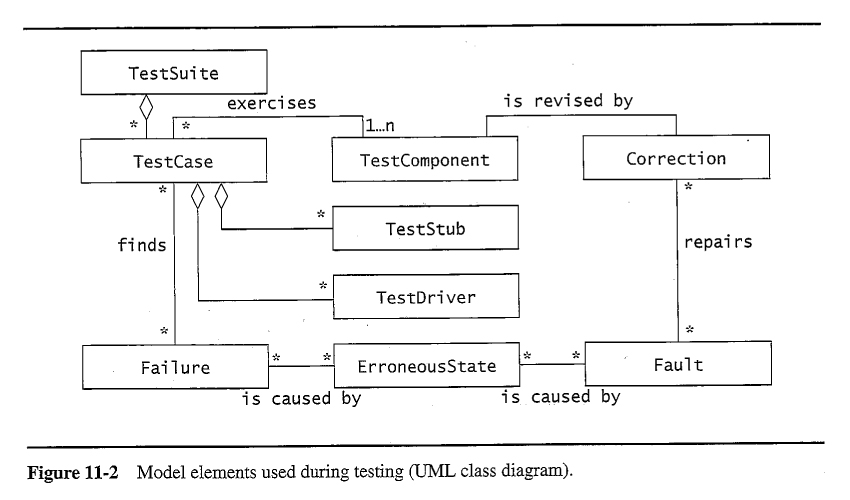

11.3.1 - Faults, Erroneous States, and Failures

11.3.2 - Test Cases

11.3.3 - Test Stubs and Drivers

- Test Stubs simulate the behavior of units called by the unit being tested.

- Test Drivers simulate the behavior of units that call the unit being tested.
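The two roles can be illustrated with a minimal sketch. Everything here is hypothetical and chosen only for illustration: `order_total` is the unit being tested, `TaxServiceStub` stands in for a lower-level unit it calls, and `run_driver` stands in for the higher-level code that would normally call it. Amounts are in integer cents to keep the arithmetic exact.

```python
def order_total(subtotal_cents, tax_service):
    """Unit under test: adds the tax reported by a lower-level unit."""
    return subtotal_cents + tax_service.tax_for(subtotal_cents)


class TaxServiceStub:
    """Test stub: simulates the unit that order_total calls."""

    def tax_for(self, amount_cents):
        # Canned behavior: a flat 10% rate instead of a real tax lookup.
        return amount_cents // 10


def run_driver():
    """Test driver: simulates the unit that would call order_total."""
    cases = [(10000, 11000), (0, 0), (1999, 2198)]  # (input, expected)
    results = []
    for subtotal, expected in cases:
        actual = order_total(subtotal, TaxServiceStub())
        results.append(actual == expected)
    return results
```

Calling `run_driver()` returns one pass/fail flag per case, so the unit can be exercised before either its real caller or its real callee exists.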

11.3.4 - Corrections

Correcting one fault can often introduce new faults. The following techniques can help reduce this problem:

- Problem Tracking involves keeping track of what faults have been found, the actions taken to correct them, what unit(s) were affected by the corrections, etc. This is covered in further detail in Chapter 13 on configuration management.

- Regression Testing involves the re-execution of previous tests following a change, to identify any new faults that may have been introduced as part of the correction.

- Rationale Maintenance documents the rationale for any changes as well as the rationale for the original design and implementation. Having this knowledge decreases the number of new faults introduced in the process of correcting other faults.

11.4.1 - Component Inspection

- Inspections involve rigorous procedures for examining artifacts ( code, documents, design models, etc. ) for faults.

- Many studies have found that inspections are more effective at finding faults than testing. ( However neither is sufficient by itself. )

- Formal inspection procedures often involve inspection teams of 3 to 4 members, generally not including the developer who produced the artifact ( other than as a "witness", allowed to speak only when asked a direct question. )

- Inspections compare the specifications against the artifacts.

- Inspections often rely upon standardized checklists of items to inspect, and quality standards documents describing how things are supposed to be done.

- One benefit of inspections is that they can be applied to unfinished code and other artifacts that cannot be "executed" for testing purposes.

- Fagan's method consists of 5 steps:

- Overview

- Preparation

- Inspection Meeting

- Rework

- Follow-up

- Approaches differ in the amount of work done during the preparation phase versus the meeting phase.

- This book does not cover inspections to the extent that most software engineering texts do.

The following four excerpts from "Software Testing and Analysis" by Pezze and Young illustrate the types of checklists and detailed descriptions used for inspections:

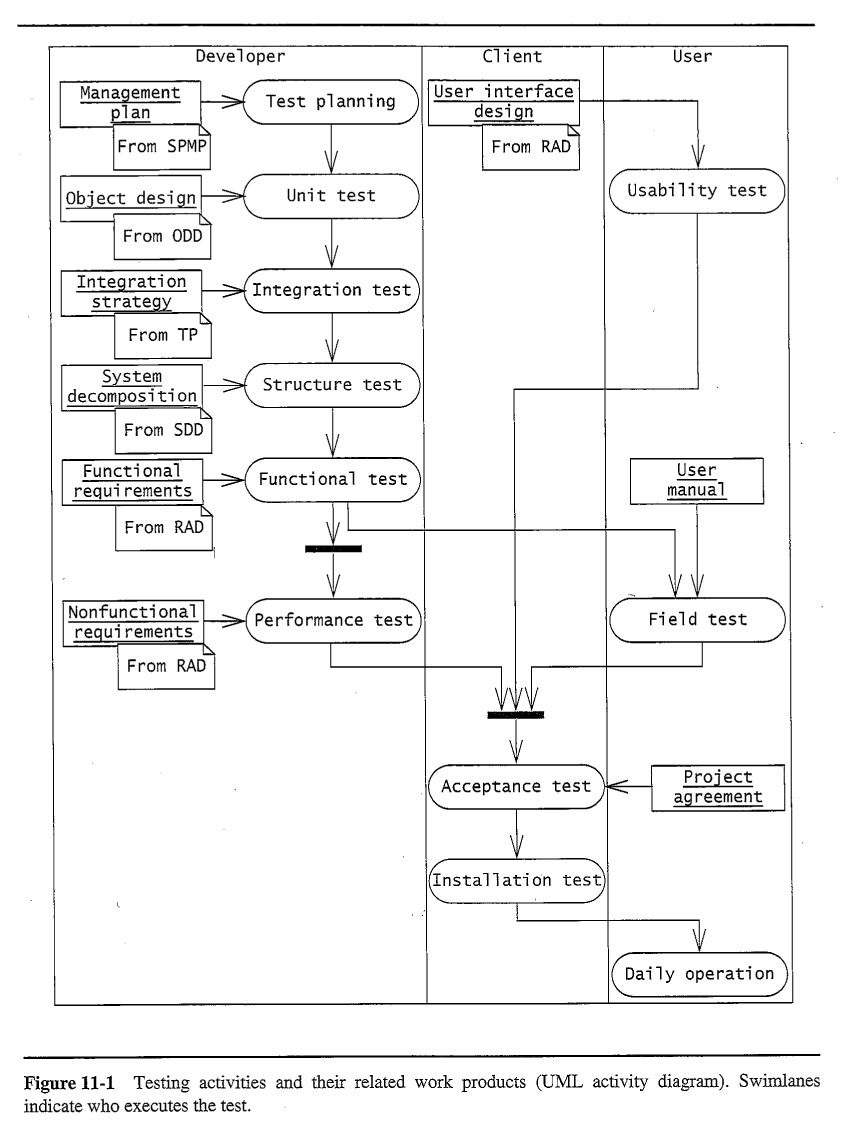

11.4.2 - Usability Testing

- Usability testing is concerned with two factors:

- The user interface, including how efficiently users can accomplish tasks, how quickly they learn to use the system, and the rate at which they make mistakes.

- Agreement between what the user expects the system to do and what it actually does. ( Validation. )

- Three types of usability tests:

- Scenario test, in which users are asked to act through a particular scenario using a prototype of the system. This is often done using paper mock-ups very early in the development process.

- Prototype test, in which a ( software ) prototype of the system is used.

- Horizontal prototypes implement a single layer in the system, such as the user interface layer.

- Vertical prototypes implement all the functionality required to execute a particular use-case.

- A Wizard of Oz prototype incorporates a human to provide the system response behind the prototyped user interface.

- Product test, in which the completed product is evaluated by a small group of test subjects.

- Alpha test is conducted in-house, with carefully selected test subjects, often company employees.

- Beta test is conducted by real customers at their sites. Beta tests may or may not be restricted to carefully selected subjects.

11.4.3 - Unit Testing

Testing of individual units, such as a class or even an individual method.

- White box testing takes advantage of knowledge of the internal structure of the unit being tested.

- Black box testing is designed solely based on the specifications of the unit, without any knowledge of its internal workings.

Experimental Design

- Whether testing widgets coming off an assembly line, scientific phenomena in nature, or software, one can never test every single item or location for every possible property of interest.

- Every test costs something, in terms of time, effort, and often consumed resources. There is never enough money in the budget or time in the schedule to test everything.

- Some tests are destructive, which usually means that they destroy the thing being tested. ( Sometimes they destroy other things. )

- Some things just can't be tested, such as redundant safety systems or systems designed to respond only to very rare circumstances.

- As a result, there is a whole field of study dealing with how to plan a series of experiments, so as to gain the greatest possible insight with the fewest number of experimental data points.

- The general idea is to try and span as much of the total possible test space with as few measurements as possible.

- In certain circumstances it may be desirable to have a higher concentration of tests in areas of particular interest, at the expense of having fewer tests in other areas.

- For example, in software testing one generally wants more tests in areas where faults are more likely to occur, in parts of the program that will see the most use in practice, and in areas where the consequences of faults are the highest ( critical areas. )

Percentage Coverage

- One way of measuring the coverage of a particular test or test suite is to look at the ratio of the amount of test space covered by the test ( suite ) to the total amount of test space, with different criteria used for measuring the "test space".

- For example statement testing would look at the number of statements executed during a particular test ( suite ) divided by the total number of statements in the code being tested. This is a particularly easy coverage to measure, because profilers will report the number of times each line of code is executed during a run, and how many lines were not executed at all.

- There are certain cases where percentage coverage cannot be measured, because the denominator is infinite. For example, if a program contains a loop there may be an infinite number of possibilities for how many times the loop is executed before continuing on to the following code.
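As a rough illustration of statement coverage, the sketch below hand-instruments a small hypothetical function, `classify`, so that each of its five statements records a label when it runs. The labels `S1` to `S5` and the helper `statement_coverage` are assumptions made for this example; in practice a profiler or coverage tool would collect this information automatically.

```python
executed = set()  # labels of statements that ran at least once

ALL_STATEMENTS = {"S1", "S2", "S3", "S4", "S5"}


def classify(n):
    executed.add("S1")
    if n < 0:                 # S1
        executed.add("S2")
        return "negative"     # S2
    executed.add("S3")
    if n == 0:                # S3
        executed.add("S4")
        return "zero"         # S4
    executed.add("S5")
    return "positive"         # S5


def statement_coverage(test_inputs):
    """Statements executed by the suite divided by total statements."""
    executed.clear()
    for n in test_inputs:
        classify(n)
    return len(executed) / len(ALL_STATEMENTS)
```

With this instrumentation, `statement_coverage([5])` gives 0.6 ( three of five statements ), while `statement_coverage([-1, 0, 5])` gives 1.0.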

Equivalence testing

- It is never possible to test every possible input value for every function.

- For example, a function that adds two 32-bit integers has 2^32 possible values for each of its two input variables, for a total of 2^64 possible input combinations.

- Equivalence testing defines ranges of input values for which all tests are expected to yield equivalent results, e.g. negative numbers, zero, positive integers, numbers larger than 32768, etc.

- Then the idea is to select test input from each equivalence class, ( and to the extent possible from all combinations of equivalence classes ), instead of every possible input value.
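The selection step can be sketched as follows for the two-integer addition example. The class boundaries ( made disjoint here, with 32768 as the split between "small" and "large" positives ) and the representative values are assumptions chosen for illustration, not a prescribed partition.

```python
from itertools import product


def equivalence_class(n):
    """Assign an integer to one of four assumed, disjoint classes."""
    if n < 0:
        return "negative"
    if n == 0:
        return "zero"
    if n <= 32768:
        return "small positive"
    return "large positive"


# One representative input per equivalence class.
REPRESENTATIVES = {
    "negative": -17,
    "zero": 0,
    "small positive": 42,
    "large positive": 100000,
}


def add(a, b):
    """Unit under test."""
    return a + b


def equivalence_test_inputs():
    """All combinations of class representatives for the two arguments."""
    return list(product(REPRESENTATIVES.values(), repeat=2))
```

This yields 4 * 4 = 16 input pairs for `add`, in place of the 2^64 combinations an exhaustive test would need.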

Boundary testing

Values on the "edges" or boundaries of equivalence ranges are most likely to cause faults. For example, leap years have special rules for years divisible by 100 or 400. ( Empty strings are often taken as a special or boundary case. )
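A minimal sketch of boundary testing for the leap-year example, using the standard Gregorian rule. The function name `is_leap_year` and the particular years chosen are illustrative; the cases sit on or next to the divisible-by-4, by-100, and by-400 boundaries.

```python
def is_leap_year(year):
    """Gregorian rule: divisible by 4, except centuries not divisible by 400."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


# Boundary cases mapped to their expected results.
BOUNDARY_CASES = {
    1900: False,  # divisible by 100 but not by 400
    2000: True,   # divisible by 400
    2100: False,  # divisible by 100 but not by 400
    1996: True,   # ordinary leap year just before a century
    2004: True,   # ordinary leap year just after a century
    2001: False,  # ordinary non-leap year
}


def failing_boundary_cases():
    """Return the years for which the unit disagrees with the expected result."""
    return [y for y, expected in BOUNDARY_CASES.items()
            if is_leap_year(y) != expected]
```

An empty list from `failing_boundary_cases()` means every boundary case passed.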

Path testing

- Path testing strives to test every path through the flow chart in at least one test case.

- Percentage coverage on a path testing basis will measure the number of paths covered divided by the total number of paths.

- Path testing is more comprehensive than statement testing, particularly in the case where one branch of a decision does not contain any statements, e.g. an "if" without an "else" can have every statement tested with only a "true" branch test, but path testing would require testing both the "true" and "false" branches.

- Path testing is generally based on flow charts of the code being tested.

Branch Testing

- Branch testing is very similar to path testing, in that it attempts to follow both the true and false branches of every condition test in the code ( and every case of every switch statement. )

- Like path testing, branch testing is more comprehensive than simple statement testing, because it follows empty branches as well as those containing statements.

- Percentage coverage of branch testing would measure the number of branches covered by the tests divided by the total number of possible branches.
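The difference from statement testing shows up with an "if" that has no "else". In the sketch below, `apply_discount` is a hypothetical unit, hand-instrumented to record which outcome of its single decision was taken; `branch_coverage` is an assumed helper for this example.

```python
branches_taken = set()  # (decision name, outcome) pairs seen so far

ALL_BRANCHES = {("is_member", True), ("is_member", False)}


def apply_discount(price, is_member):
    branches_taken.add(("is_member", bool(is_member)))
    if is_member:        # no else: the false branch contains no statements
        price -= 10
    return price


def branch_coverage(test_inputs):
    """Branches taken by the suite divided by total branches."""
    branches_taken.clear()
    for price, is_member in test_inputs:
        apply_discount(price, is_member)
    return len(branches_taken & ALL_BRANCHES) / len(ALL_BRANCHES)
```

The single test `(100, True)` executes every statement of `apply_discount`, yet `branch_coverage([(100, True)])` is only 0.5. Adding `(100, False)` brings it to 1.0.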

Condition Testing

- Condition testing strives to test both the true case and the false case for every condition evaluation in the program, which is more comprehensive than simple branch testing when compound conditions are present.

- Consider for example the line of code:

if( month == 4 || month == 6 || month == 9 || month == 11 )

- Branch testing would only need two tests for the above: one in which the compound test evaluates to true and one in which it evaluates to false.

- Condition testing would evaluate both the true and false cases for each of the four conditions, preferably in combinations where each condition determines the result of the overall compound statement.
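The month example can be transliterated into Python to compare the two criteria. The helper names below are assumptions for this sketch. The suite `[4, 6, 9, 11, 5]` makes each condition true exactly once while the others are false, plus one case where all four are false, so each condition alone determines the overall result in some test.

```python
def has_30_days(month):
    """The compound decision from the example line of code."""
    return month == 4 or month == 6 or month == 9 or month == 11


def conditions(month):
    """Outcome of each individual condition, ignoring short-circuiting."""
    return (month == 4, month == 6, month == 9, month == 11)


def every_condition_seen_true_and_false(months):
    """True if each of the four conditions is true in some test and false in another."""
    outcomes = [conditions(m) for m in months]
    return all(
        any(o[i] for o in outcomes) and any(not o[i] for o in outcomes)
        for i in range(4)
    )


BRANCH_TESTS = [4, 5]                # one true outcome, one false outcome
CONDITION_TESTS = [4, 6, 9, 11, 5]   # each condition both true and false
```

`BRANCH_TESTS` satisfies branch testing but never makes the second, third, or fourth condition true, so a fault such as `month == 10` in place of `month == 11` would go undetected by it.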

State-Based Testing

- Ensure that every state transition is tested by at least one test case.

- May require knowing and setting the internal state of objects, which poses a problem in the face of information hiding and encapsulation.

- Percentage coverage would measure the number of state transitions tested divided by the total number of possible state transitions.
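A small sketch of transition coverage, using a hypothetical two-state turnstile ( `locked` / `unlocked`, with `coin` and `push` events ) as the object under test. Note that the sketch reads `t.state` directly, which is exactly the kind of access that encapsulation may prevent in real code.

```python
# (current state, event) -> next state
TRANSITIONS = {
    ("locked", "coin"): "unlocked",
    ("locked", "push"): "locked",
    ("unlocked", "coin"): "unlocked",
    ("unlocked", "push"): "locked",
}


class Turnstile:
    def __init__(self):
        self.state = "locked"

    def handle(self, event):
        self.state = TRANSITIONS[(self.state, event)]


def transition_coverage(event_sequences):
    """Transitions exercised by the test sequences divided by all transitions."""
    covered = set()
    for events in event_sequences:
        t = Turnstile()  # each test case starts from the initial state
        for event in events:
            covered.add((t.state, event))
            t.handle(event)
    return len(covered) / len(TRANSITIONS)
```

The sequence `["coin", "push"]` covers half of the transitions; `["coin", "coin", "push", "push"]` covers all four.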

Polymorphism testing

All possible bindings need to be tested for each method that can be sent. ( And sometimes all combinations of bindings. )
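As a sketch, suppose a method `area` can be bound to any of several subclasses of a hypothetical `Shape` class. The test table below lists one case per binding, and `untested_bindings` checks that no direct subclass has been left out. All class names and values are illustrative.

```python
class Shape:
    def area(self):
        raise NotImplementedError


class Square(Shape):
    def __init__(self, side):
        self.side = side

    def area(self):
        return self.side * self.side


class Rectangle(Shape):
    def __init__(self, width, height):
        self.width, self.height = width, height

    def area(self):
        return self.width * self.height


class RightTriangle(Shape):
    def __init__(self, base, height):
        self.base, self.height = base, height

    def area(self):
        return self.base * self.height / 2


# One test case per possible binding of area(): (receiver, expected result).
BINDING_TESTS = [(Square(3), 9), (Rectangle(2, 5), 10), (RightTriangle(3, 4), 6)]


def untested_bindings():
    """Direct subclasses of Shape that no test case exercises."""
    tested = {type(shape) for shape, _ in BINDING_TESTS}
    return set(Shape.__subclasses__()) - tested
```

If a new subclass is added without a matching entry in `BINDING_TESTS`, `untested_bindings()` reports it.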

Sample Unit Testing

The following are sample solutions to old exam questions on testing. They are somewhat more complete than what is really expected of students taking an exam, but more appropriate for inclusion in a report.

11.4.4 - Integration Testing

After units are tested individually, then combinations of units must be tested, ( focusing primarily on the interfaces between the units. )

Horizontal integration testing strategies

- Assumes that the architecture has a layered structure.

- The big bang approach tests the entire system once the unit tests are complete.

- Bottom-up testing first integrates units at the bottom level of the hierarchy, and works up.

- Top-down testing integrates from the top level first, working down.

- Sandwich Testing combines bottom-up and top-down testing. First the top and bottom layers are unit tested, and then they are integrated with the middle or "target" layer in parallel. Target layer units are not individually unit tested.

- Modified Sandwich Testing unit tests all layers before commencing integration testing.

Vertical integration testing strategies

Focus on early testing and integration of all units necessary to provide partial functionality of the product.

11.4.5 - System Testing

Functional testing

Tests functional requirements, as outlined in use-cases.

Performance testing

Tests non-functional requirements, as outlined in the requirements documents:

- Stress testing tests the system under heavy loads.

- Volume testing tests the system with large volumes of data, such as large files or databases.

- Security testing often involves tiger teams.

- Timing testing tests timing constraints.

- Recovery testing tests the system's ability to recover from erroneous states.

Pilot testing

Testing done in the field by a select group of test subjects, e.g. alpha and beta tests.

Acceptance testing

Testing performed by the client, in the development environment.

Installation testing

Testing performed after the system has been installed at the client's site.

11.5.1 - Planning Testing

11.5.2 - Documenting Testing

Four types of testing documents:

- Test Plans cover most of the managerial aspects, such as testing schedules, budgets, and the allocation and scheduling of necessary resources.

- Test Specifications document the specific details of individual tests. Details include input data, output oracles, pass/fail criteria, time required, location of relevant files, associated requirements, and references to other documents as appropriate.

- Test Incident Reports document a specific execution of a specific test. Includes who conducted the test, when the test was done, and the results of the tests.

- Test Report Summary documents all of the failures found by all of the tests.

11.5.3 - Assigning Responsibilities

- Testing is best performed by someone other than the developer who developed the artifact being tested, particularly beyond the unit testing level.

- Some organizations have entire testing teams / departments.

- Incentives must be provided for finding faults, without penalizing those who produced them.

11.5.4 - Regression Testing

- Any changes to the system, such as fault corrections, design changes, or the introduction of new features or functionality, may cause the introduction of new faults into units that had already been tested.

- Re-testing of affected units is important, but it is generally not possible to re-test every affected unit every time a change is made.

- Selection of units to be re-tested may follow the following guidelines:

- Retest dependent units. Subsystems that depend on a changed unit are most likely to break after the changes are made.

- Retest risky use cases, i.e. the ones most likely to cause problems and the ones with the largest potential risk of damages.

- Retest frequent use cases. Those cases most frequently executed are most likely to encounter a problem eventually.
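The "retest dependent units" guideline can be sketched as a search over a dependency map. The unit names and the `DEPENDS_ON` table are hypothetical; the function returns the changed unit together with every unit that depends on it directly or indirectly.

```python
# unit -> units it depends on (hypothetical system)
DEPENDS_ON = {
    "ui": ["orders"],
    "orders": ["pricing", "storage"],
    "reports": ["storage"],
    "pricing": [],
    "storage": [],
}


def units_to_retest(changed, depends_on):
    """The changed unit plus all units that directly or indirectly depend on it."""
    to_retest = {changed}
    frontier = [changed]
    while frontier:
        current = frontier.pop()
        for unit, dependencies in depends_on.items():
            if current in dependencies and unit not in to_retest:
                to_retest.add(unit)
                frontier.append(unit)
    return to_retest
```

A change to `pricing` selects `pricing`, `orders`, and `ui`, while a change to `ui` selects only `ui`. Risk and frequency of use would then be used to prioritize within, or add to, this set.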

11.5.5 - Automating Testing

11.5.6 - Model-Based Testing

The following material is excerpted from "Software Testing and Analysis - Process, Principles, and Techniques", by Pezze and Young. It is a required textbook when I teach CS 442, Software Engineering II.

- Sensitivity: The degree to which a test can detect error conditions. High sensitivity is good.

- Redundancy: Checking for consistency whenever the same intent is expressed in more than one way, e.g. type checking.

- Restriction: Sometimes it is easier and more efficient to check a more restrictive condition than the actual desired condition.

- Partition: Dividing a large complex problem into smaller parts that can be attacked individually, e.g. unit testing and then integration testing.

- Visibility: The extent to which the testing process and progress towards goals is visible to all involved.

- Feedback: Adjusting testing procedures based on results from past projects. E.g. "Every time you find a bug, write a test that would have caught that bug had it been run.", or creating new checklists based on bugs commonly encountered before.

- Definition-use pairs look at the places where variables are given values ( definitions ), and where those values are used ( uses ), and all the paths that lead from each possible definition to each possible use.

- Data flow analysis looks at the flows of information from inputs to outputs, and all the code along the way.

- Adequacy criteria have been discussed above as "percentage coverage".

- Some criteria are more rigorous than others. For example, condition testing is more rigorous than branch testing, which is more rigorous than statement testing.

- Combinatorics look at all possible options ( categories ) for each input variable, and attempt to have at least one test covering each combination of categories. For example if there were two equivalence partitions for the first variable, three for the second and five for the third, a total of 30 different tests would be called for.

- In general full combinatorics is not practical, so an alternative approach is to at least cover every possible pair of categories. In the above example, there are 2*3 = 6 pairs for the first two variables, 3*5=15 for the second and third, and 2*5=10 for the first and third. However some of these pairs can be combined, reducing the number of tests needed below 30, ( possibly as low as 15. )

- See above.

- See above.

- Testing OO software is complicated by information hiding and polymorphism, requiring special testing techniques.

- One option is to include self-testing code in each class, but that poses a dilemma:

- If the testing code is left in when the product ships, it is more complicated ( and hence has more opportunities for faults ) than it needs to be.

- If the testing code is removed before distribution, then the code that is distributed is not the same code that was tested.

- Scaffolding is a support structure, used ( in this context ) to support the testing process.

- Oracles are means of knowing what the "correct answers" for each test should be. Possible oracles include hand calculations, previous versions of the program, early prototypes, competing products, practical measurement or experimentation, or other tools that can generate the answers such as Excel or Matlab.

- See above for a full discussion of inspections.

- Pair programming is an agile development method in which one programmer continuously monitors another as the code is being developed, essentially performing a real-time inspection to ensure high quality.
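Returning to the combinatorial example in the list above ( two, three, and five categories for the three variables ), the arithmetic can be checked with a short sketch. The function names and the particular construction of the 15-test suite are assumptions for this illustration; other pairwise suites are possible.

```python
from itertools import combinations, product

SIZES = [2, 3, 5]  # number of categories for each of the three variables


def full_combination_count(sizes):
    """Number of tests needed to cover every combination of categories."""
    return len(list(product(*[range(n) for n in sizes])))


def uncovered_pairs(tests, sizes):
    """Category pairs (var i, value, var j, value) that no test covers."""
    needed = set()
    for i, j in combinations(range(len(sizes)), 2):
        for vi in range(sizes[i]):
            for vj in range(sizes[j]):
                needed.add((i, vi, j, vj))
    for t in tests:
        for i, j in combinations(range(len(t)), 2):
            needed.discard((i, t[i], j, t[j]))
    return needed


# One test per (second, third) pair; the first variable alternates so that
# it is paired with every category of the other two variables.
PAIRWISE_SUITE = [((b + c) % 2, b, c) for b in range(3) for c in range(5)]
```

Here `full_combination_count(SIZES)` is 30, while `PAIRWISE_SUITE` contains 15 tests and leaves no pair uncovered. Fewer than 15 is not possible, since the second and third variables alone have 3*5 = 15 pairs and each test covers only one of them.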

The following material is excerpted from "Software Engineering 8", by Ian Sommerville. It is an alternate textbook that has been used in CS 440 in past semesters.

Sommerville's inspection process involves two meetings - One to get an overview understanding of the artifact, presented by the author, and another to review the findings of the inspection process:

Like Pezze, Sommerville breaks inspection checklists down into categories:

The format of Sommerville's test plan document is similar to our author's: